Tag: “Disability”

A Clusive Success Story

Clusive is a free, flexible, adaptive and customizable learning environment. It is a web application for students and teachers that addresses access and learning barriers present in digital and open learning materials.

Introduction to Data Science Tools Resources in the We Count Research Library

Data science has a steep learning curve, and inaccessible tutorials, resources and tools create additional barriers. To support a more inclusive environment in the field of data science, We Count has created a resource library.

AI, Fairness and Bias

Dr. Jutta Treviranus participated in the Sight Tech Global 2020 panel "AI, Fairness and Bias" on December 2.

We Count Badges

We Count badges enabled people to showcase their proficiency in the growing fields of AI, data systems and inclusive data practices as well as other skills.

Communication Access within the Accessible Canada Act Reports

The Communication Access within the Accessible Canada Act project ended in March of this year with 26 recommendations to improve communication access within federal government services.

Continuing Our Work During COVID-19

A message from IDRC Director Jutta Treviranus about continuing our mission during COVID-19.

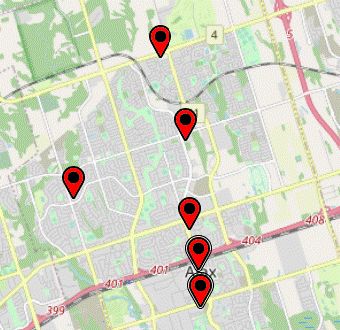

COVID-19 Vaccination Centre Data Monitor: Accessibility Map Demonstration

A prototype COVID-19 data monitor, created to show how data gaps can be addressed by providing a way to find accessibility information that the underlying data sets do not include.

Disability Bias in AI-Powered Hiring Tools Co-Design

In May, we completed our second set of co-design sessions with the Equitable Digital Systems (EDS) project. EDS explores how to make digital systems more inclusive for persons with disabilities in the workplace.

Ensuring Equitable Outcomes from Automated Hiring Processes: An Update

In this article, Antranig considers this problem in the context of corporate apologies for technological practice and of initiatives, such as data feminism, that seek to transfer power from privileged groups to those at the margins of society.

Ensuring Equitable Outcomes from Automated Hiring Processes

These automated hiring and matching algorithms, implemented by major corporations such as LinkedIn and Amazon, can be positioned in the wider context of automated processes that use machine learning/AI algorithms and support the infrastructure of society. These systems inevitably result in inequitable outcomes.